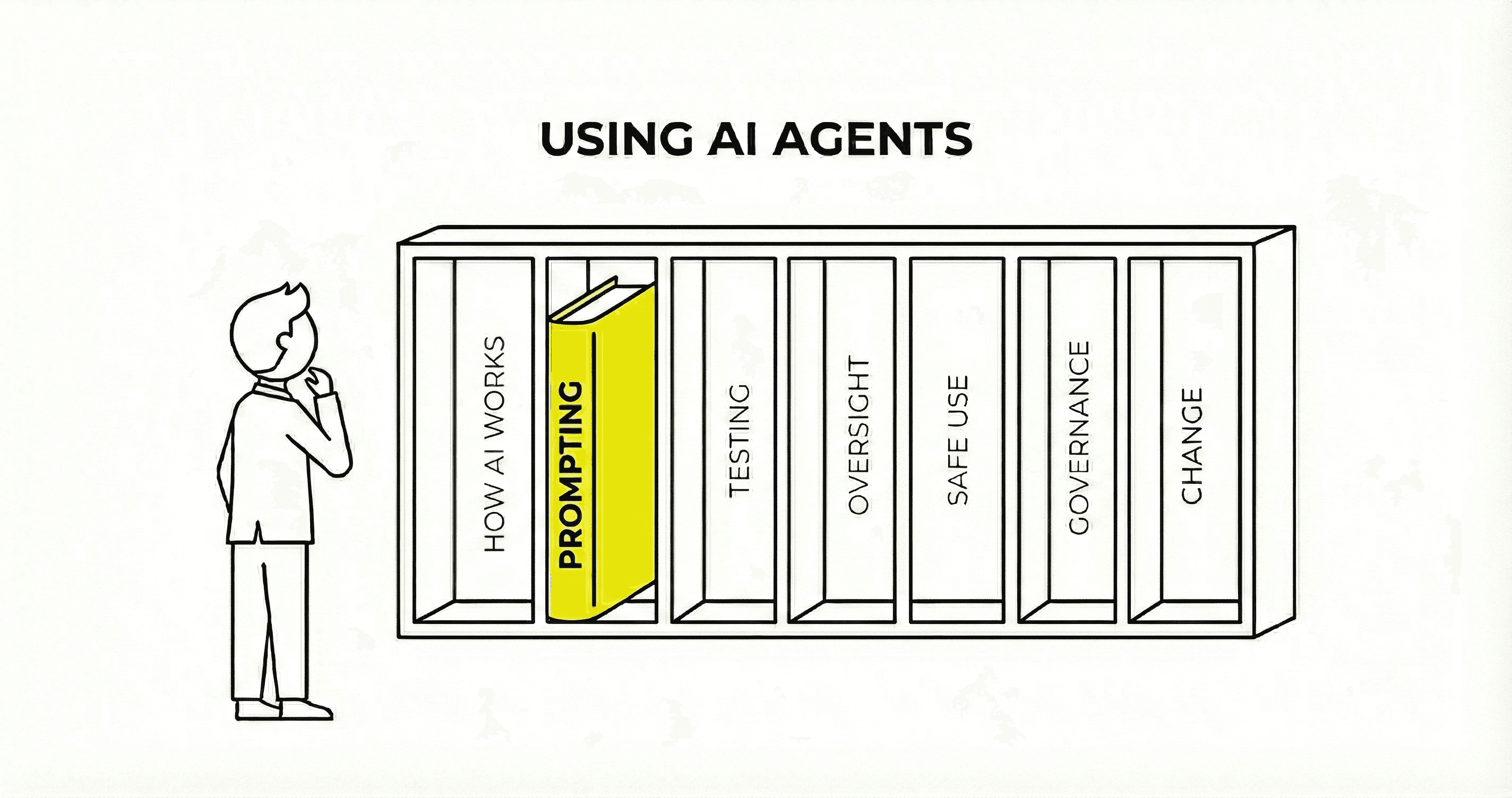

AI agent guardrails - a quick guide

Guardrails are a critical part of any AI agent development project

As organisations increasingly adopt AI agents, guardrails are becoming more critical. There are two main types of guardrail, and the one that matters most is the one that gets missed.

Input guardrails check what users ask before the AI processes it: blocking inappropriate requests, keeping conversations on topic, and filtering out attempts to manipulate the system. Most organisations cater for these.

Output guardrails check what the AI produces before showing it to users. This is the area that gets forgotten. And it's where the real risk actually sits. If you like analogies, think of a restaurant. Input guardrails are the menu, setting boundaries on what customers can order. Output guardrails are the chef checking the plate before it leaves the kitchen. You'd never send food out without looking at it first.
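For readers who want to see what this looks like in practice, here is a minimal sketch in Python of both checks wrapped around a model call. Everything in it is an assumption made for illustration: the phrase lists, the fallback message, and the names `check_input`, `check_output` and `respond` are hypothetical, and a real system would likely use classifiers or policy lookups rather than simple keyword matching.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical rules for illustration only; not a recommended policy.
BLOCKED_INPUT_PHRASES = ("ignore previous instructions",)
UNAPPROVED_OUTPUT_PHRASES = ("refund", "guarantee")
FALLBACK = "I can't help with that here. Let me connect you with a colleague."


@dataclass
class Verdict:
    allowed: bool
    reason: str = ""


def check_input(user_message: str) -> Verdict:
    """Input guardrail: runs before the model sees the request (the menu)."""
    lowered = user_message.lower()
    for phrase in BLOCKED_INPUT_PHRASES:
        if phrase in lowered:
            return Verdict(False, f"blocked input phrase: {phrase}")
    return Verdict(True)


def check_output(draft: str) -> Verdict:
    """Output guardrail: runs on the draft before the user sees it (the chef)."""
    lowered = draft.lower()
    for phrase in UNAPPROVED_OUTPUT_PHRASES:
        if phrase in lowered:
            return Verdict(False, f"unapproved output phrase: {phrase}")
    return Verdict(True)


def respond(user_message: str, generate: Callable[[str], str]) -> str:
    """Call the model only if the input passes; release the draft only if it passes too."""
    if not check_input(user_message).allowed:
        return FALLBACK
    draft = generate(user_message)  # the AI produces a response
    if not check_output(draft).allowed:
        return FALLBACK  # the plate never leaves the kitchen
    return draft


if __name__ == "__main__":
    # Stand-in for a real model that invents a policy.
    def fake_model(message: str) -> str:
        return "You are entitled to a full refund within 90 days."

    print(respond("What is your bereavement policy?", fake_model))
```

The point of the sketch is the second check inside `respond`: the user's question is perfectly reasonable and passes the input guardrail, yet the draft answer is still held back because it makes a promise nobody approved.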

In 2024, a Canadian tribunal ruled against Air Canada after its chatbot invented a refund policy. The company had tried to argue that the chatbot was a "separate legal entity" responsible for its own actions, and the tribunal rejected this. Since then, OpenAI has faced multiple lawsuits after allegedly relaxing safeguards, and Character.ai has banned open-ended chats for under-18s. We've also recently seen several firms in trouble over allowing users to 'nudify' photos.

Several governments are now demanding output controls from major AI companies to protect against harmful content. If you're commissioning AI agents, ask whoever builds them: what happens between the AI generating a response and the user seeing it? That gap is where output guardrails live.

Category: Insights

Written by: Julia Druck