Beyond Prompting: The Skills Your Firm Needs to Get Value from AI

Key Takeaways

AI agents are handling complex business processes autonomously: reviewing contracts, processing claims, running credit checks across multiple systems, and freeing professionals for higher-value work.

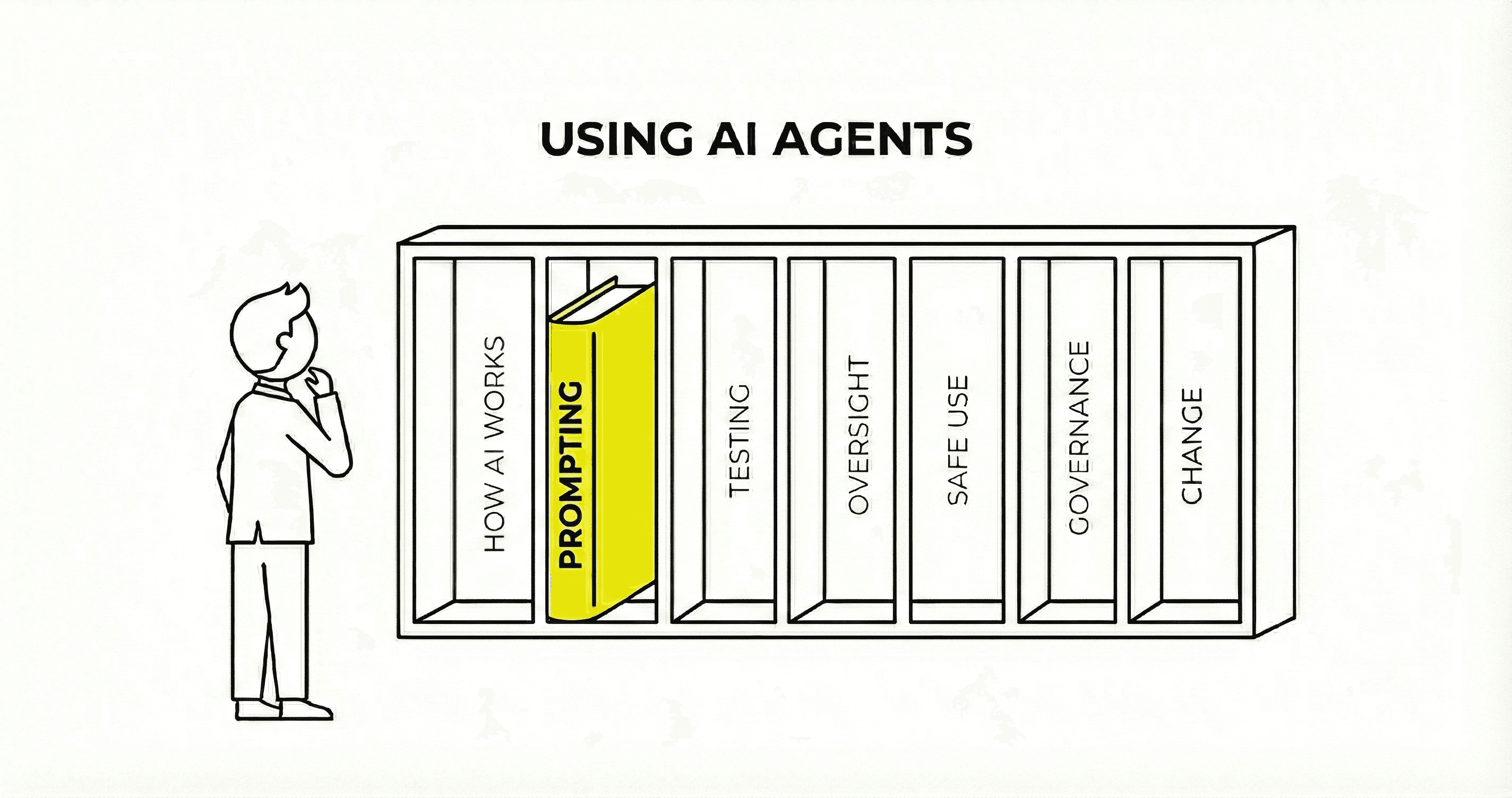

Teaching everyone to prompt addresses only one of the seven capabilities that organisations need to embed AI effectively.

Identifying use cases, building and deploying safely, managing security risks, and embedding AI through structured change management all require broader skills appropriate to specific roles.

We have identified seven capabilities across three tiers: foundation knowledge for everyone, operational disciplines for specific roles, and strategic capabilities for leadership.

Organisations that invest in the full capability set will be the ones that capture the value. Only 14% of organisations currently have agentic AI ready for deployment.¹

Realising the Value from AI Takes More Than Prompting Skills

"The AI has got to know me." This is how several professionals, including attendees from large firms, described their experience of AI tools at workshops earlier this year. They believed the tool was learning from them and improving over time. In reality, it was doing what AI assistants with a "memory" feature are built to do: reading a file of stored notes into its context at the start of each session. The underlying model has no memory, no relationship, and no accumulated understanding in the way these professionals meant. It meets its user for the first time in every session and reads that stored text to catch up on previous context. This gives the appearance of 'getting to know you', but the mechanism is fundamentally different from human learning.

This gap in conceptual understanding, and particularly the tendency to attribute human qualities to AI systems, has significant implications for how organisations assess AI capabilities, design deployments, and manage risk. It becomes more important as AI, and especially agentic AI, grows more capable. These tools now have access to more business-critical systems and are being used to run more complex processes autonomously, processes that require human oversight and governance.

AI agents now review contracts against internal policy, pull transaction histories from CRMs, process insurance claims, synthesise research across hundreds of documents, and draft client proposals, connecting directly to financial platforms, operational databases, and document repositories. The value is significant, but capturing it safely requires people who can identify the right use cases, design workflows that account for AI limitations, evaluate whether AI output is reliable, build human oversight into the process, understand AI-specific security risks, establish governance frameworks, and manage the organisational change that embedding AI demands. These capabilities go well beyond writing a good prompt, and most organisations are not building them.

Why Prompting Is Not Enough

Prompting skills matter. Knowing how to frame a request, provide context, and iterate on an AI response is a genuine and valuable capability. But the conversation about AI readiness has narrowed so far toward prompting that it has crowded out everything else.

This narrowing made sense when AI tools primarily answered questions in a chat window. It makes far less sense now. AI systems are moving from responding to queries toward taking autonomous action: accessing business systems, making decisions across multi-step workflows, retrieving and processing sensitive data, and executing tasks without human involvement. In our work, we see this shift accelerating across professional services. The role is changing from typing prompts into a text box to orchestrating portfolios of intelligent systems that connect to CRMs, financial platforms, and operational databases. McKinsey's 2026 analysis describes the same pattern at scale: the emergence of an "agentic" workforce where workers become "agent orchestrators."²

The scale of the readiness gap is considerable. Technology skills shortages are projected to cost the global economy $5.5 trillion by 2026, with AI skills among the most acute gaps within that broader shortfall.³ Only a third of employees have received any AI training at all, and only a third of organisations say they are fully ready to adopt AI-driven ways of working.³ Meanwhile, just 14% of organisations have agentic AI solutions ready for deployment, and 35% have no formal strategy for agentic AI at all.¹

The real question for leaders is not whether their people can prompt effectively. It is whether their organisation has the broader capabilities to make AI work reliably, safely, and at scale, and to capture the value that well-deployed AI can deliver.

What Embedding AI Actually Requires

Prompt fluency is one capability. Through our work building and deploying AI agents for professional services firms, we have identified seven capabilities that organisations need, organised into three tiers that reflect who needs what:

Tier 1: Foundation capabilities (conceptual understanding and applied fluency) that everyone in the organisation needs to make sound decisions about AI and use it effectively.

Tier 2: Operational disciplines (evaluation, human oversight design, and security awareness) that product managers, technical leads, and compliance teams need to build and run AI systems reliably.

Tier 3: Strategic capabilities (governance and change management) that leadership needs to scale AI adoption, sustain its value, and meet regulatory requirements.

Most organisations have partial coverage of the first tier and almost nothing in the other two. The sections below set out what each capability involves, why it matters, and where to go deeper.

Tier 1: Foundation (What Everyone Needs)

1. Conceptual Understanding

How AI actually works: not at a technical level, but in enough depth to make sound decisions. This means clearing fundamental misconceptions: AI tools do not learn from individual users, do not accumulate understanding over time (unless specifically architected to do so), and do not "know" anything in the way that a human colleague does. They generate responses based on patterns in training data and whatever context they are given in the moment.
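A minimal Python sketch can make the "memory" mechanism from the opening anecdote concrete. It assumes a hypothetical `call_model` function standing in for a real model API, plus hypothetical `save_note` and `start_session` helpers; none of these names come from a specific product. What it illustrates is that the model's output depends only on the text it receives in the moment, and anything that looks like remembering is ordinary file handling that feeds stored notes back into that text.

```python
import tempfile
from pathlib import Path


def call_model(context: str) -> str:
    """Hypothetical stand-in for a language model call.

    The output depends only on the text passed in: nothing persists between
    calls, so anything the model 'knows' about the user must be in `context`.
    """
    notes = [line for line in context.splitlines() if line.startswith("NOTE:")]
    return f"I can see {len(notes)} stored note(s) about you."


def save_note(notes_file: Path, note: str) -> None:
    # The 'memory' feature is ordinary file I/O, not learning by the model.
    with notes_file.open("a", encoding="utf-8") as f:
        f.write(f"NOTE: {note}\n")


def start_session(notes_file: Path, user_message: str) -> str:
    # Each session starts from scratch: stored notes are read and prepended
    # to the user's message before the model sees anything.
    notes = notes_file.read_text(encoding="utf-8") if notes_file.exists() else ""
    return call_model(notes + "\n" + user_message)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        memory = Path(tmp) / "memory.txt"
        print(start_session(memory, "Hello"))        # no notes yet
        save_note(memory, "Prefers UK spelling")
        print(start_session(memory, "Hello again"))  # the note is read back in
        memory.unlink()                              # delete the notes...
        print(start_session(memory, "Hello?"))       # ...and the 'relationship' is gone
```

Deleting the notes file makes the apparent relationship disappear, because nothing was ever stored in the model itself.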

This matters because every subsequent capability depends on it, and because getting it right unlocks better use cases and safer deployment. Consider a product manager designing a workflow for an AI system that reviews client documents. If that product manager believes the AI "understands" the client relationship and improves through repeated interactions, they will design the workflow with too little verification and too few checkpoints. The resulting system will look competent on routine documents but fail silently on edge cases, because nobody built in the checks that a correct understanding of the technology would have demanded. Conversely, a product manager who understands what AI actually does can design workflows that play to its strengths while managing its limitations, capturing more value with less risk.

Getting the conceptual foundation right is not a nice-to-have. It is the prerequisite for everything else.

2. Applied AI Fluency

Going beyond prompting to identifying use cases, designing workflows that account for AI limitations, and knowing when output needs verification. Applied fluency means understanding not just how to use AI, but when to use it, when not to, and what can go wrong.

The distinction between prompting and applied fluency is the difference between being able to write a good email and being able to design a communications strategy. Prompting is a skill; applied fluency is a discipline. It includes knowing that AI systems produce confident, well-formatted output regardless of whether the content is correct. It includes understanding that the same prompt can produce different results on different occasions, and designing processes that account for this variability. It includes recognising when a task is better suited to a traditional approach than to AI.

Consider a team that uses AI to draft client communications. The prompting is competent: the outputs are well-structured and professional. But nobody has accounted for the fact that the AI will occasionally include details from one client's context in another client's draft, because the tool draws on whatever context it has been given without distinguishing between confidential sources. Applied fluency would have caught this at the workflow design stage, before the first draft was sent.
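One workflow-level control for this scenario is a pre-send check that scans each draft for identifiers belonging to other clients and holds any match for human review. The sketch below assumes each client has a maintained list of identifiers (names, account numbers, project codenames); the `Client` type and `leaked_identifiers` function are hypothetical, and plain substring matching is deliberately crude, so a check like this would complement, not replace, keeping each client's context separate in the first place.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Client:
    """Hypothetical record of the identifiers that belong to one client."""
    name: str
    identifiers: frozenset[str]  # e.g. names, account numbers, project codenames


def leaked_identifiers(draft: str, recipient: Client, clients: list[Client]) -> set[str]:
    """Return identifiers of clients *other than* the recipient found in the draft."""
    text = draft.lower()
    leaks: set[str] = set()
    for client in clients:
        if client.name == recipient.name:
            continue  # the recipient's own details are expected in their draft
        leaks.update(i for i in client.identifiers if i.lower() in text)
    return leaks


if __name__ == "__main__":
    acme = Client("Acme", frozenset({"Acme", "ACC-1001"}))
    globex = Client("Globex", frozenset({"Globex", "Project Falcon"}))
    draft = "Dear Acme team, following our Project Falcon review, we recommend..."
    leaks = leaked_identifiers(draft, acme, [acme, globex])
    if leaks:
        print(f"Hold for human review: found {sorted(leaks)}")
```

The design choice is to fail closed: any match stops the draft and routes it to a person, rather than letting the tool decide whether the overlap matters.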

Most training programmes stop at prompting. Applied fluency programmes, covering workflow design, limitation awareness, and practical use-case identification, are rare. Yet this is where the day-to-day value of AI is realised or lost. Product managers, process owners, and wider professional staff all need this capability, though the depth varies by role: product managers need detailed fluency in workflow design and failure modes, while wider staff need enough understanding to use AI tools safely and recognise when output needs verification.

Tier 2: Operational Disciplines (What Specific Roles Need)

3. Evaluation and Quality Assurance

Measuring whether AI produces reliable work. This includes understanding AI failure modes, building quality checks, and knowing the difference between an AI system that can do something and one that will do it consistently.

This distinction, between capability and reliability, is critical and widely misunderstood. An AI system that performs well in a demonstration may fail far more often than the demonstration suggests once it is deployed at scale. Enterprise benchmarking research consistently finds that AI agents that score well on single-run evaluations show significant reliability drops when asked to perform the same task repeatedly, with some runs derailing completely.⁴ If you are deploying a system that processes financial data or generates client reports, "it worked in the demo" is not sufficient evidence of production readiness.
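The capability/reliability distinction can be made measurable by running each test task several times and comparing the share of tasks solved at least once with the share solved on every run, an idea some agent benchmarks report as pass^k. The sketch below is a minimal illustration under assumed inputs: `results` is a hypothetical record of pass/fail outcomes from repeated runs, already judged by whatever quality check the team uses, and the task names are invented.

```python
def reliability_report(results: dict[str, list[bool]]) -> dict[str, float]:
    """Summarise repeated runs of the same test tasks.

    `results` maps a task ID to the outcome of each repeated run,
    where True means the output passed the team's quality check.
    """
    n = len(results)
    return {
        # Average success rate of a single run: roughly what a one-off demo samples.
        "per_run_success": sum(sum(runs) / len(runs) for runs in results.values()) / n,
        # Share of tasks solved at least once: evidence of capability.
        "solved_at_least_once": sum(any(runs) for runs in results.values()) / n,
        # Share of tasks solved on every run: evidence of reliability.
        "solved_every_run": sum(all(runs) for runs in results.values()) / n,
    }


if __name__ == "__main__":
    # Hypothetical outcomes from four runs of each of four tasks.
    results = {
        "summarise_contract": [True, True, True, True],
        "extract_fees": [True, False, True, True],
        "reconcile_ledger": [False, False, True, False],
        "draft_cover_note": [True, True, True, True],
    }
    print(reliability_report(results))
```

For these made-up outcomes, every task is solved at least once (1.0) and single runs succeed 75% of the time, yet only half the tasks (0.5) are solved on every run. The same arithmetic explains why one successful demo says little: if each run independently succeeded 90% of the time, eight consecutive successes would occur with probability 0.9^8, about 43%.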

In our experience, few firms outside the technology sector have systematic evaluation approaches for AI output. Most rely on "it seems to work," which is not sufficient for production use. Product managers, QA leads, and technical leads need this capability, and building it requires specific knowledge that generic AI training does not cover. Our article on evaluating AI agents sets out a practical framework for classifying outputs, building quality checks, and measuring reliability.⁵

4. Human Oversight Design

Where and when humans check AI decisions. What triggers a review. How escalation works. Not "keep a human in the loop" as a compliance checkbox, but a designed capability that accounts for how AI systems actually fail.

Consider an AI system reviewing loan applications in a financial services firm. The system flags 15% of applications for human review based on criteria it was given. But AI systems fail in ways that are difficult to predict: a subtle shift in how the model interprets a particular type of income documentation might cause it to approve applications it should flag, with no visible error. If your oversight process only reviews the applications the AI flagged, and never samples the ones it approved, the error goes undetected until it surfaces as a portfolio risk.
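The fix in this example is to review more than the AI's own flags. The sketch below assumes each decision is recorded as an application ID with an outcome of "flag" or "approve"; the `select_for_review` function and its default 5% audit rate are hypothetical, not a recommended standard. It sends every flagged application to review and also draws a random sample of approvals, so that errors which silently turn a "flag" into an "approve" have a chance to surface.

```python
import math
import random
from typing import Optional


def select_for_review(
    decisions: list[tuple[str, str]],
    audit_rate: float = 0.05,  # hypothetical value; set from the firm's risk appetite
    seed: Optional[int] = None,
) -> tuple[list[str], list[str]]:
    """Build two review queues from AI decisions.

    `decisions` holds (application_id, outcome) pairs, where outcome is
    "flag" or "approve". Flagged items all go to review; a random sample
    of approvals is audited too, because errors that turn a 'flag' into an
    'approve' produce no visible signal.
    """
    rng = random.Random(seed)
    flagged = [app for app, outcome in decisions if outcome == "flag"]
    approved = [app for app, outcome in decisions if outcome == "approve"]
    # Round up so any non-zero rate audits at least one approval; cap at the total.
    k = min(len(approved), math.ceil(len(approved) * audit_rate))
    audit_sample = rng.sample(approved, k)
    return flagged, audit_sample


if __name__ == "__main__":
    # Hypothetical batch: 200 applications, 15% flagged by the AI.
    batch = [(f"APP-{i:03d}", "flag" if i % 100 < 15 else "approve") for i in range(200)]
    flagged, audit = select_for_review(batch, seed=7)
    print(f"{len(flagged)} flagged for review, {len(audit)} approvals sampled for audit")
```

Random sampling is the simplest choice; a firm might instead weight the audit towards higher-value or unusual applications, but some independent check on approvals is the part that matters.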

Article 14 of the EU AI Act requires human oversight for high-risk AI systems.⁶ Most organisations treat this as a policy statement rather than a design requirement. For product managers, change managers, and compliance teams, human oversight design is a practical discipline: deciding which outputs need human review, designing review workflows that are efficient rather than performative, and building escalation paths that reflect the actual risk profile of each AI decision.

5. Security and Risk Awareness

Understanding AI-specific threat models at a practical level: fabrication risks, data boundaries, prompt injection, and the ways AI systems can be manipulated or produce harmful outputs. This is not a policy document that sits in a shared drive. It is working knowledge that people across the organisation need, tailored to the depth their role requires.

For security professionals and technical leads, this means detailed knowledge of AI-specific attack vectors: how prompt injection works, how adversarial inputs can compromise agent behaviour, how orchestration platforms concentrate credentials and become high-value targets. In January 2026, a critical vulnerability (CVE-2026-21858) was disclosed in n8n, a widely used workflow automation tool, with over 26,000 instances exposed on the public internet at the time.⁷ The vulnerability allowed unauthenticated remote code execution, giving an attacker access to every credential stored in the system.

For product managers and compliance teams, it means a working understanding of these risks sufficient to design systems that account for them: ensuring AI agents cannot access data beyond their remit, building in validation steps that prevent fabricated outputs reaching clients, and knowing when to escalate security concerns.

For wider staff, it means awareness of the principles of safe AI use: understanding that AI can produce confident but fabricated outputs, knowing not to share sensitive data in contexts where it may be exposed, and recognising the signs of an AI system behaving outside its intended boundaries.

In our experience, most staff have little or no awareness of these risks. Our articles on securing AI agents and designing systems that prevent fabrication cover the practical security knowledge required at each level.⁸ ⁹
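For the product-manager level above, "ensuring AI agents cannot access data beyond their remit" usually means enforcing the boundary in code that sits between the agent and the data, rather than relying on instructions in the prompt, which injected text may be able to override. The sketch below assumes a hypothetical `AgentPolicy` object consulted before every data request; the agent and source names are illustrative only. It shows a deny-by-default allowlist, with refused attempts recorded so they can be escalated.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical per-agent remit: the data sources one agent may read."""
    agent_id: str
    allowed_sources: frozenset[str]
    denied: list[str] = field(default_factory=list)  # refused attempts, for escalation

    def authorise(self, source: str) -> bool:
        # Deny by default. The check runs in ordinary code outside the model,
        # so text injected into a document cannot talk its way past it.
        if source in self.allowed_sources:
            return True
        self.denied.append(f"{self.agent_id} denied access to {source}")
        return False


if __name__ == "__main__":
    claims_agent = AgentPolicy("claims-triage", frozenset({"claims_db", "policy_docs"}))
    for source in ["claims_db", "hr_records"]:
        print(source, "allowed" if claims_agent.authorise(source) else "blocked")
    print(claims_agent.denied)
```

A repeated pattern of denials from one agent is itself a signal worth escalating: it may indicate a misconfigured workflow or an agent whose behaviour has been manipulated.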

Tier 3: Strategic Capabilities (What Leadership Needs)

6. Governance and Accountability

Who owns AI decisions. How they are documented. What audit trail exists. How incidents are managed. This goes beyond having a governance policy: it means operational governance that works in practice when an AI system makes a consequential error.

In our experience, this is where the gap between policy and practice is widest. Organisations write governance documents but lack the operational frameworks to apply them when an AI agent produces an incorrect recommendation, processes data it should not have accessed, or makes a decision that a client or regulator questions. With only 14% of organisations reporting agentic AI solutions ready for deployment,¹ many are still building these frameworks, and they need to address AI that takes autonomous action, not just AI that answers questions. For business leaders, compliance teams, and boards, the questions are specific: who is accountable when an AI agent takes an incorrect action? What evidence exists to demonstrate due diligence? How are AI decisions audited?

Regulatory scrutiny is intensifying, particularly in financial services. The EU AI Act sets requirements for high-risk AI systems including documented risk management, human oversight, and audit trails. Financial services regulators are developing their own AI-specific frameworks alongside these broader requirements.¹⁰ Organisations that treat governance as a future concern rather than a current requirement risk finding themselves non-compliant with regulations that are already in force or imminent. Our analysis of financial services regulation sets out the landscape in detail.¹⁰

7. Change Management and Integration

How workflows actually change when AI enters them. How teams adapt. How resistance is managed. How adoption is measured and sustained, not just launched. This is the most neglected capability of all.

In our work, we see the same pattern repeatedly: organisations attempt to automate existing processes rather than redesigning them. Deloitte's 2026 analysis of agentic AI adoption confirms this is an industry-wide issue.¹ Layering AI onto unchanged processes captures a fraction of the potential value and creates friction that erodes adoption. The shift from "doing the work" to "overseeing the work" is a fundamental behavioural change, and it does not happen by making a tool available. It requires deliberate design: new workflows, new review processes, new definitions of what "good work" looks like when AI is involved.

Lloyds Banking Group offers a useful example of what structured change management can achieve at scale. Their programme deployed 1,000 volunteer "flight instructors" to coach colleagues, ran weekly "promptathons" to share best practice, and trained over 10,000 staff, reaching 93% daily usage among licensed users; the bank is working toward 67,000 staff trained by the end of 2026.¹¹ ¹² The programme is peer-led, embedded in daily work, and backed by senior executive commitment: 200 senior executives went through dedicated training first.

Even the most advanced programmes reported publicly, including Lloyds', focus primarily on prompting fluency. The broader capabilities (evaluation, oversight, security, and governance) are not yet addressed at scale across the industry. Our article on running AI agents in production covers the operational side of this challenge, including how roles and workflows change when AI becomes part of the team.¹³

What This Means

The seven capabilities described above are not optional extras. They are what it takes to move from experimenting with AI to embedding it in how your organisation works and capturing the value it can deliver.

Everyone in the organisation needs conceptual grounding: a clear understanding of what AI actually does, how it fails, and why that matters for the decisions they make every day. Without this, the misconceptions that lead to poorly designed workflows, misplaced trust, and unmanaged risk will persist regardless of how much prompting training you provide.

Specific roles need specific capabilities. Your product managers need to evaluate AI output against defined criteria and design workflows that account for AI limitations, not just write effective prompts. Your change managers need to redesign processes around AI, not layer it onto existing workflows and hope adoption follows. Your compliance and technical leads need practical knowledge of AI security risks, oversight design, and evaluation methods that generic training does not cover.

And leaders need more than a working knowledge of the technology. They need an organisational view: governance frameworks that assign accountability, audit trails that satisfy regulators, and change management strategies that sustain adoption beyond the initial rollout. The EU AI Act, financial services regulation, and increasing client scrutiny all demand this.

The gap between where most organisations are and where they need to be is wide. It is also widening, because AI capabilities are advancing faster than organisational readiness. Closing it requires deliberate, structured investment across all three tiers, not just the one the industry has converged on.

What Leaders Should Do Now

Assessing your organisation against the seven capabilities is a practical starting point. Most firms will find they have partial coverage of prompting and almost nothing across the other six.

Start with the gaps that carry most risk. For regulated firms, governance and human oversight design are likely the most urgent priorities. The EU AI Act and financial services regulators are increasing scrutiny of AI decision-making, and the requirements are specific: documented risk management, audit trails, and human oversight for high-risk systems.⁶ ¹⁰ For firms deploying AI agents, evaluation capability is critical: without it, you have no systematic way to know whether the AI is producing reliable work. For all firms, conceptual understanding is the foundation on which every other capability depends.

Build applied, role-specific capability. The goal is not "everyone knows what AI is." It is that your product managers can evaluate AI output and design workflows that account for how AI fails. That your change managers can redesign processes rather than bolting AI onto what already exists. That your leaders can articulate what governance means operationally, not just as a policy statement. This requires training that is tailored to specific roles and builds skills teams can use immediately, not general presentations about what AI might do in the future.

Measure whether it is working. Sustained investment requires evidence of progress. Define what success looks like for each capability, track adoption beyond login counts, and use the seven-capability framework as a benchmark to identify where gaps remain and where they are closing.

Next Steps

The organisations that succeed with AI will not be those that taught everyone to prompt. They will be those that built the full capability set across all three tiers: foundation, operational disciplines, and strategic capabilities.

1. Assess your current position. Map your organisation against the seven capabilities. Identify which tiers have coverage and which have gaps. Be honest about the difference between awareness (people have heard of AI risks) and applied capability (people can evaluate AI output, design oversight processes, and identify security risks in practice).

2. Prioritise by risk and value. Start with the capabilities that address your greatest exposure. For regulated firms, governance and oversight design are likely first. For firms deploying AI agents into production, evaluation capability is essential. For all firms, conceptual understanding is the prerequisite for everything else.

3. Go deeper on the operational disciplines. Our published articles cover several of these capabilities in detail. Our guide to evaluating AI agents covers how to build quality checks and measure reliability.⁵ Our article on securing AI agents addresses the practical security knowledge organisations need.⁸ Our analysis of financial services regulation sets out the governance landscape.¹⁰ And our article on running AI agents covers the operational disciplines required when AI becomes part of the team.¹³

4. Build capability through applied training. Serpin offers training courses that address these capabilities directly, tailored to specific roles including product managers, change managers, and business leaders. Each course is practical, use-case-based, and built on what we have learned building and deploying AI agents for real client work. See our training courses on the Serpin website.

Sources

1. Deloitte (2026). 'The Agentic Reality Check.' Deloitte Insights: Tech Trends 2026. Available at: https://www.deloitte.com/us/en/insights/topics/technology-management/tech-trends/2026/agentic-ai-strategy.html

2. McKinsey & Company (2026). 'Building and Managing an Agentic AI Workforce.' Available at: https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/the-future-of-work-is-agentic

3. IDC/Workera (2025). 'The $5.5 Trillion Skills Gap.' Available at: https://www.workera.ai/blog/the-5-5-trillion-skills-gap-what-idcs-new-report-reveals-about-ai-workforce-readiness. See also: CIO Dive (2025). 'Tech talent, skills gaps may cost trillions.' Available at: https://www.ciodive.com/news/tech-talent-skills-gaps-cost-trillions-idc/716523/

4. Simmering, P. (2026). 'The Reliability Gap: Agent Benchmarks for Enterprise.' 4 January 2026. Available at: https://simmering.dev/blog/agent-benchmarks/

5. Serpin (2026). 'Evaluating AI Agents: A Practical Guide for Product and Technology Leaders.' Available at: https://serpin.ai/insights/evaluating-ai-agents-a-practical-guide-for-product-and-technology-leaders

6. EU AI Act, Article 14: Human Oversight. See also: Spear Tech (2025). 'Human-in-the-Loop Is Not Optional.' Available at: https://www.spear-tech.com/human-in-the-loop-is-not-optional-designing-oversight-into-agentic-ai-systems/

7. Cyera Research Labs (2026). 'Ni8mare: Unauthenticated Remote Code Execution in n8n.' CVE-2026-21858 (CVSS 10.0). January 2026. Available at: https://www.cyera.com/research-labs/ni8mare-unauthenticated-remote-code-execution-in-n8n-cve-2026-21858. See also: Censys (2026) identifying 26,512 exposed n8n instances on the public internet. Available at: https://censys.com/advisory/cve-2026-21858

8. Serpin (2026). 'Securing AI Agents: What We've Learned Building Them.' Available at: https://serpin.ai/insights/securing-ai-agents-what-we-ve-learned-building-them

9. Serpin (2025). 'How We Designed a Zero-Fabrication Research Agent.' Available at: https://serpin.ai/insights/how-we-designed-a-zero-fabrication-research-agent

10. Serpin (2025). 'More AI Regulation Is Coming in Financial Services.' Available at: https://serpin.ai/insights/more-ai-regulation-is-coming-in-financial-services

11. Microsoft UK Stories (2025). 'Lloyds Banking Group: Using AI at Scale to Transform Operations and Employee Experience.' Available at: https://ukstories.microsoft.com/features/lloyds-banking-group-using-ai-at-scale-to-transform-operations-and-employee-experience/

12. IT Pro (2025). 'Lloyds Banking Group Wants to Train Every Employee in AI.' Available at: https://www.itpro.com/business/careers-and-training/lloyds-banking-group-wants-to-train-every-employee-in-ai-by-the-end-of-this-year-heres-how-it-plans-to-do-it

13. Serpin (2026). 'Running AI Agents: What Changes When the Bot Joins the Team.' Available at: https://serpin.ai/insights/running-ai-agents-what-changes-when-the-bot-joins-the-team

Written by Scott Druck